User perspectives on identity and safety in social VR

Prof. Dr. Matthias Quent

Professor of Sociology and chairman of the Institute for Democratic Culture at Magdeburg-Stendal University of Applied Sciences. He also heads the Immersive Democracy project.

Sara Lisa Vogl

Cofounder of the NPO Women in Immersive Technologies Europe (WIIT) and member of the World Economic Forum’s Global Future Council for VR/AR, Sara has been collaboratively constructing and exploring VR experiences since 2013. With a background in communication arts and interactive media, and in love with the idea of new worlds, Sara is on a mission to go beyond the status quo of what immersive virtual realities are and to explore their diverse potential for the future.

Background and significance

Virtual worlds offer unique opportunities for individuals to interact, collaborate, and form relationships in digital spaces. Virtual online spaces have gained significant prominence in the last two decades through advances in technologies such as virtual reality (VR), augmented reality (AR), artificial intelligence (AI), and general computing power. These digital environments provide immersive and interactive spaces and scenarios in which individuals can engage in social interactions, explore diverse identities, and experience various forms of relationships. The growing relevance of social life within virtual worlds makes it possible to examine the implications and benefits for individuals who spend substantial amounts of time in these online social spaces.

Virtual worlds are computer-simulated environments where users can interact with each other and with the digital environment itself. These digital spaces can take the form of fully immersive virtual reality (VR) environments or less immersive platforms accessed through computer screens or mobile devices like smartphones. Virtual social spaces offer participants a range of experiences, from gaming and socializing to educational and professional interactions. In this study, we focus on fully immersive social interaction via head-mounted displays (HMDs).

The distinguishing features of virtual worlds include:

Immersive Environments:

Through VR technology, users can engage with three-dimensional environments that simulate real-world experiences or fantastical settings. Virtual worlds can provide users with a sense of immersion and presence, blurring the line between the physical and digital realms. The interactive nature of VR and the direct feedback of avatar embodiment heighten the immersion in these environments. Higher levels of immersion enhance the feeling of being present in the virtual world, which in turn creates more engaging and rewarding experiences.

Avatars:

Avatars are digital representations of users within virtual worlds. They serve as users’ virtual personas, allowing them to navigate and interact with the environment and other participants. Avatars can be customized to reflect people’s preferences and can range from human-like representations to imaginative creatures or objects. They play a crucial role in self-expression and identity exploration within virtual worlds. The effect of avatars on people differs fundamentally depending on whether they are experienced in a fully immersed state in VR or on a 2D screen such as a mobile phone.

Social Networking Capabilities:

Virtual worlds experienced through VR foster social connections by enabling people to interact and communicate with each other in ways very similar to real life. The immersive nature of VR, combined with the real-time embodiment of full-body-tracked avatars, enables social interactions that include body language and can create a sense of presence similar to that of real-life social interactions. Most platforms provide features such as voice and text chat, allowing users to engage in real-time conversations and supporting the formation of communities, friendships, and professional networks.

The significance of virtual worlds in today’s society is multifaceted:

Social Connection and Interaction:

Virtual worlds offer individuals a way to connect with others, independent of physical distance or geographical barriers. They provide a platform for socialization, enabling people to meet, socialize, and collaborate with others who share similar interests or goals. Virtual worlds have become important social spaces for forming relationships, creating communities, and fostering a sense of belonging for individuals.

Entertainment and Gaming:

Virtual worlds offer a wide range of gaming experiences, from massively multiplayer online games (MMOs) to VR gaming. VR has profoundly impacted the gaming industry, providing immersive and interactive environments in which gamers can literally slip into the shoes of the main character. Virtual worlds have become popular spaces for gamers to explore, socialize, compete, and play cooperatively with others.

Education and Training:

Virtual worlds and scenarios are widely used in education and training, offering innovative and immersive learning experiences. They provide platforms for simulations, role-playing scenarios, and virtual classrooms, enabling learners to acquire new knowledge and skills in an engaging and interactive way. Virtual worlds can facilitate experiential learning, professional development, and collaborative problem-solving, among many other factors that may enhance the way we learn and train.

Creative Expression and Commerce:

Virtual worlds serve as spaces for creative expression, allowing users to design and build virtual objects, environments, and experiences. Users can engage in virtual commerce, sell virtual goods and services, and monetize their creations. Virtual economies such as that of Second Life have emerged, in which users can buy, sell, and trade virtual assets, fostering a vibrant digital marketplace that is independent of users’ physical locations or socio-cultural backgrounds.

Research and Innovation:

Virtual worlds have become important research tools for various disciplines, including psychology, sociology, medicine, and architecture, thanks to the nearly unlimited possibilities of simulation.

In summary, virtual worlds offer immersive environments, customizable avatars, and social networking capabilities, providing opportunities for social connection, entertainment, education, creativity, and research. As technology continues to advance, virtual worlds are expected to play an increasingly significant role in shaping how we interact, learn, and experience digital spaces.

Virtual reality (VR) has evolved in recent years from a niche technology to a widely adopted platform with numerous applications. The modern use of the term VR goes back to the computer scientist Jaron Lanier, who established it in the 1980s (cf. LaValle 2020:5). According to Steven M. LaValle, “The most important idea of VR is that the user’s perception of reality has been altered through engineering, rather than whether the environment they believe they are in seems more ‘real’ or ‘virtual’. A perceptual illusion has been engineered.” (ibid. 2020:6). This immersive technology enables users to enter worlds vastly different from their physical surroundings, opening new dimensions of experience and interaction. Applications of VR systems range from video games, immersive cinema, and telepresence (as in Google Street View) to education (cf. ibid. 2020: 9–22).

Among the most significant and increasingly relevant applications of VR systems are social VR platforms. These platforms “[…] provide virtual environments that users can visit and move around in, acting as a public space to meet other individuals to interact with and communicate with. The interest in social VR platforms is steadily growing, and social VR platforms such as VRChat are widely popular among VR users. These digital environments are visited by users through the use of an avatar that acts as a digital body.” (Stockselius 2023:1).

A leading example of social VR platforms, and the focus of this article, is VRChat, a widely popular platform for social interaction. “VRChat is considered one of the most popular social VR platforms, with the number of users rapidly increasing over the COVID-19 pandemic to 22,000 users per day and over 4 million total users. VRChat was created in 2014 and has raised over $95.2 million in investment, however, remains free for users to play. […] VRChat is less focused on gameplay and more centered around creativity and social interaction.” (Deighan et al. 2023:2).

Arne Vogelgesang highlights that “Social VR as a practice can be understood as embodied social role-playing in a system of networked and bounded virtual 3D spaces inhabited by avatars connected to human users. Due to technical limitations, a single space on a social VR platform can currently usually accommodate no more than 50 people at a time, which structurally favours the dynamic formation and dissolution of social groups and their localization” (Vogelgesang 2024: 1–2).

While VRChat offers vast opportunities for creativity and social interaction, it also brings significant challenges. In their study ‘Challenges of Moderating Social Virtual Reality’, Sabri et al. (2023) discuss the difficulties associated with moderating such platforms. The anonymity and deep immersion provided by VR can foster both positive and negative behaviors, posing a challenge for moderators who must ensure a safe and enjoyable environment without stifling user freedom and creativity. These challenges range from combating harassment and abuse to enforcing age restrictions and ensuring privacy.

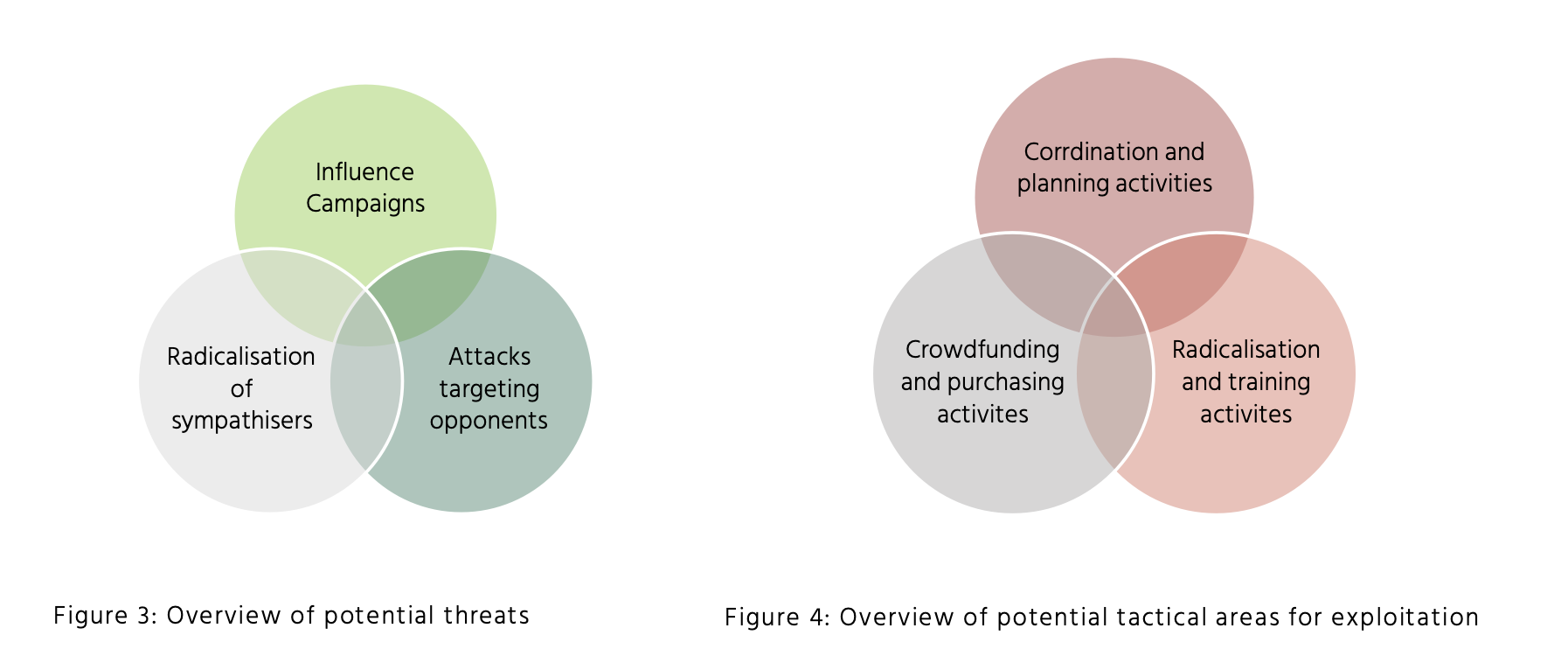

These challenges are not unique to VRChat but reflect broader societal concerns about immersive technologies. In his analysis of democratic cultures in the ‘next Internet’, Matthias Quent (2023) highlights that while VR offers significant opportunities at the individual level, it also poses major challenges at the societal level (cf. Quent 2023: 30; English translation). He sees immersive technologies like VR as offering significant opportunities, such as supporting psychotherapy for anxiety and depression, fostering empathy and inclusion through perspective-taking, and enabling innovative educational experiences. However, they also pose risks, as seen on platforms like Roblox, where user-generated content can promote discrimination, far-right ideologies, and group-focused enmity in violation of community standards (cf. Quent 2023: 38; English translation).

The societal risks identified by Quent are further underscored by a recent study on the metaverse that analyzed the experiences of U.S. adolescents. Based on a nationally representative survey of 5,005 U.S. adolescents aged 13 to 17, the researchers found that 44% of youth reported experiencing hate speech, 37% bullying, and 35% harassment in the metaverse. Sexual harassment (18.8%) and grooming or predatory behavior (18.1%) were also significant concerns, with girls disproportionately affected (cf. Hinduja/Patchin 2024: 6).

These findings highlight the need for better policies, content moderation, and educational efforts to mitigate the risks the metaverse poses for adolescents, and they make clear that improved protection mechanisms are needed. This topic, along with user perspectives, is explored further in the following interviews.

What are social VR and VRChat?

Social VR refers to virtual reality platforms that emphasize interpersonal interaction, enabling users to meet, communicate, and engage in shared activities within virtual environments. One of the most popular and pioneering platforms in this domain is VRChat. Created in 2014 and released on Steam in 2017, VRChat offers a diverse array of user-generated worlds and avatars, allowing participants to interact in an almost limitless range of settings. Beyond real-time voice and text-based conversations, users can take part in activities such as games, karaoke, movie nights, and educational sessions. As of 2023, VRChat has millions of registered users, exemplifying the growing appeal of virtual spaces for social connection and collaborative experiences. Users can access the platform on a PC, with VR headsets, and via a mobile app on smartphones (beta version). High-quality VR experiences have demanding hardware requirements, typically a VR headset connected to a powerful computer. Such hardware matters particularly for displaying detailed, high-resolution avatars, although users can reduce the visible level of detail in the software settings. Fully immersive experiences therefore require expensive hardware.

Relation to the Metaverse

The “metaverse” refers to a collective virtual shared space. It’s a vision of a fully immersive digital universe that people can inhabit, work, play, and socialize in, akin to a massive, interconnected digital society.

Platforms like VRChat and other social VR experiences serve as early indicators or prototypes of what the metaverse might look like in the future. They allow users to transcend physical limitations, enabling them to interact with others in a virtual universe with diverse worlds and activities. In VRChat, for instance, the idea of user-generated content, in which participants create their own avatars, design their worlds, and curate their experiences, aligns closely with the broader metaverse vision. Current social VR platforms pave the way for a comprehensive and integrated digital ecosystem in which physical and virtual worlds become increasingly interconnected. As technologies advance and more platforms emerge, the vision of a unified metaverse becomes increasingly tangible, with current social VR platforms serving as its foundational building blocks and as environments for learning and experimentation.

Research objectives and questions

As part of our exploratory research, we wanted to find out why people use social VR, what experiences they have had there, particularly with regard to identity, safety, and hate speech, and how they protect themselves from harassment. Questions asked in the interviews, which were conducted inside the social VR platform VRChat, included:

- Which gender do you represent in VR? Does this differ from your real-life gender?

- Why do you use VR?

- What makes you feel safe in VR?

- What makes you feel unsafe, if anything?

- Have you experienced harassment or hate speech in VR?

- If so, how often does it happen? How does it happen? How have these experiences affected you, perhaps compared to similar expressions on social media or in the real world? How do you deal with it? What helps you deal with it?

- What ways do you know to protect yourself from harassment or hate in VR? Which of them have you already used? How often?

- What other protections would you like or need?

- How many avatars are on your block list? Are they usually strangers or known people?

- What are the consequences of blocking? Are there any negative consequences or irritations as a result?

- How does it feel to get blocked?

- Do you perceive political statements in VR? If so, which ones?

- Have you noticed extremist or hateful activities?

- Do you think criminal activities in VR should be prosecuted by police and courts?

- What should policy makers, regulators or designers know about VR experiences like VRChat?

Research design and data collection methods

To address these questions, we conducted four qualitative guided interviews as avatars in VRChat in autumn 2023. The anonymized participants come from different countries and were recruited as a self-selected sample from the virtual network of VR designer Sara Lisa Vogl. The interviews were transcribed, coded according to the key questions, and analyzed. As exploratory pilot research, the study is limited in scope and its results cannot be generalized. However, they provide first-hand insights into user behavior and experiences in social VR and thus help fill an empirical gap in the primarily theoretical and technological research literature. Further research with more interviewees in virtual worlds can build on this.

Our methodology aims to provide insights into social interaction behavior and interaction design mechanisms within the metaverse, focusing on subjective and qualitative measures to capture the experiences of individuals interacting in different capacities within social online spaces. By observing and analyzing these interactions, we aim to interpret the insights gained and establish relationships between the observed behaviors and the potential mechanisms that contribute to the described outcomes.

The results of this data collection will provide insights into concepts that should be considered in the design of virtual worlds, contributing to a better understanding of the potential impact of social VR on individuals’ subjective experiences and their resulting perceived abilities in real-life contexts.

Because VR allows users to change their appearance and virtual bodies completely, the interviewees have the opportunity to appear in various identities unrelated to their real-life bodies and identities with regard to age, gender, and abilities. A common trend observed in VRChat is that male-identifying users often prefer to wear female, anime-style avatars.

Findings from the interviews

VR Usage of interviewees

To gain insights into the patterns and diversity of VR usage among our participants, and how these relate to identity and safety issues inside and outside of VR, we asked interviewees how long and for what purposes they have been using social VR.

Participants had been using social VR, mainly VRChat, for between two and five years.

For some participants, social VR is an alternative to real-life social interaction that still lets them share desired activities and feel connected to friends. For self-described introverts in particular, meeting in social VR offers the benefit of additional layers of control over the environment. People can adjust the number of users visible to them as well as the colors and audio of virtual worlds and avatars. They can also completely hide other users they do not want to see or interact with.

Interviewee 1 describes how they can meet friends and have social interactions from the comfort of their home: “It is very good for socializing. […] I don’t need to drive anywhere, and I can hang out with some of my best friends. […] So, I get that feeling of closeness that I can’t really get in the real world right now.”

They go on to describe how they can adjust their VR experience to their preferences and do not have to be exposed to people or situations they have difficulties coping with: “I do not need to interact with people that I don’t want to, and having that extra bit of control over the situation is just amazing for me, especially because I’m an introvert.”

Interviewee 1 highlights the social benefits of VRChat, emphasizing that it allows them to meet and interact with friends from the comfort of their home while feeling as if they were sitting next to each other, watching a movie or engaging in other activities.

Additionally, they value the control VRChat offers over their social environment. They can adjust the volume of individual users, hide overly bright avatars, or block individuals they do not wish to interact with. This control is particularly beneficial for them as an introvert, as it allows them to manage social interactions in a way that suits their preferences and comfort level.

Interviewee 2 mentions how they leverage the opportunities of social VR to combat their real-life social anxiety:

“My reason for starting playing this game was the way to deal with social anxiety and just talking to people in general. I was really bad at it as I was as a young adult […] bullied in school and stuff, so VRChat was a nice fresh breath of air in terms of being social with people, […].”

They further highlight that in VRChat people get to know each other mainly based on their personality instead of their physical appearance: “[…] The fact that people don’t see you for who you actually are but see you for a personality only. […] I feel like it’s a confidence boost […].”

Interviewee 2 describes that the social interaction in VRChat is like training in a safe environment with positive feedback that encourages them to engage in social interaction:

“I’m more confident interacting with people in general just because of the amount of hours that are put into actually talking to people in this game specifically. […] you just meet strangers from across the world and you start talking to them.”

Interviewee 2 discusses how they use VRChat to cope with social anxiety. VRChat provided a way to practice social interactions in a comfortable environment, helping them to improve their social skills.

They emphasize that in VRChat, people are judged based on their personality rather than physical appearance. This aspect helps bypass insecurities related to looks, offering a confidence boost as interactions are based on the personality projected through their virtual avatar.

Additionally, they describe VRChat as a safe training ground for social interactions. The positive feedback and practice gained from engaging with strangers worldwide in VRChat have increased their confidence in real-life social interactions.

Interviewee 3 describes their main motives for social interaction in virtual reality as escaping reality, meeting new people, and learning and practicing English, since they are not a native speaker: “[…] I meet a lot of new people, make some, make a lot of friends and I start to speak English. I speak bad English, but I started learning and writing and speaking more well than before […].”

Interviewee 3 uses Virtual Reality primarily to escape from the stresses of reality and to relax. They are pleased with this choice as it allows them to meet new people, make friends, and practice their English skills.

Interviewee 4 states that their social VR usage is mainly driven by the wish to see and interact with people who are too far away to visit in person, and highlights the level of immersion that full-body tracking provides in VR:

“I use VR because it helps in a way to have connections with people who are long distance or overseas and it makes you feel more closer to someone than you ever can be with just desktop games […] you can actually interact and […] you can like cuddle and like hug them and stuff […].”

Gender identity and embodiment of diverse genders

Another aim was to explore how the interviewees experienced and expressed their gender identity in social VR. We asked them about their gender identity in real life and how it matched or differed from their avatar’s appearance and gender identification. We also inquired about their feelings of embodiment and agency in social VR, and how other users perceived and interacted with them. Through this set of questions, we expected to gain insights into the challenges and opportunities of fundamentally free and fluid gender expression and embodiment in social VR, as well as whether and how these experiences relate to the interviewees’ real-life identity and gender expression.

Interviewee 1 presents as gender fluid in real life and VR:

“I am 22. I am gender fluid. (in real life) […] I tend to make myself just as kind of androgynous as possible. Most of my personal avatars I typically go with the male variant but or sometimes I will use like a female with a smaller chest.”

They describe the sound of a user’s voice as the main cue other users rely on to identify gender in VR: “Of course some people still do use she/ her here because I do have a more feminine sounding voice as well.” They also state that their choice of diverse pronouns was respected most of the time:

“But I have run into a number of times where I’ve said hey, you know, like please use like they, them or even sometimes he/ him pronouns for me. And a good number of times they will be like, yeah, of course.”

They also report that, as in real life, some people do not respect their chosen pronouns: “Of course you’ve run into some people that don’t respect that, but you’ll run into that anywhere, unfortunately.”

Interviewee 2 identifies as male, uses he/him pronouns, and presents as male in real life, but presents as female in virtual reality:

“I’m a he/ him. I’m a dude 100%, always been a man. I’ve never been questioning my own gender. I just like being a cute anime girl in VRChat, OK? Yes, my choice of avatar does not represent my gender and all right, it’s because guys want to be cute too, without being a woman.”

Interviewee 3 states that their pronouns and gender identification in VR change with the avatar they wear and the way they present in VR: “In VR I usually go with the avatar, so when I am a female, I prefer people use she. When I am a male, I prefer he, and when I am an object so like a lamp or stuff like that, it doesn’t matter.”

This differs from their real life, where they present as their birth gender and use only the corresponding pronouns: “No, it’s something I just used to do in VRChat – in real life I am not into this stuff and people call me for what is in.” (I 3)

Interviewee 4 presents as the same gender in VR as in reality: “I go by she/ her, and so that’s the same in VR as in real life.”

Experienced harassment or hate speech

Harassment, especially sexual harassment, as well as antisemitic, racist, and transphobic hate comments or extremist content not only have harmful individual and societal consequences, which are well researched for social media, but can also lead people to avoid certain virtual platforms and spaces. Protecting users from harassment and creating an inclusive, dignity-oriented climate is particularly important in immersive virtual environments, since it can be assumed that users experience encounters mediated by embodiment and virtual reality more intensely than those in text-based media. No reliable studies or figures are yet available on the prevalence of hate messages in immersive environments, partly because platform providers, unlike the largest social media platforms, are not (so far) obliged to provide public and transparent information on their measures against hate. Real-time presence, real-time verbal communication, and the absence of recordings make documenting hate messages in social VR particularly difficult. In the interviews, respondents reported varying degrees of experience with harassment or hate speech in VR.

Interviewee 2 observes “[…] definitely a lot of racism in this game, like in a lot of unfiltered language that is very racist or just offensive to people and genders and all that stuff. It’s very offensive.” Users can encounter this in entirely ordinary situations. In addition to racist discrimination, the interviewee reports gender-based discrimination in particular:

“[…] People who come out, especially online […], [saying] ‘I’m transgender or I’m into my own gender stuff,’ […]definitely don’t deserve to have random slurs thrown at them.” (I2)

Reflecting wider political discourse, the interviewee mostly observes hateful messages directed against the LGBTIQ community: “Hateful comments and stuff towards the entirety of LGBTQ [community]. […] Because it’s become pretty prominent outside of VR Chat as well […].” (I 2)

Interviewee 1 makes direct comparisons to social media and therefore questions whether social VR should be understood as a game or a social medium: “VR chat is more of a social media that has games in it […] It’s a social app. It’s not a game. And so unfortunately, sometimes you will stumble into the wrong groups.” (I 1)

In social VR, too, harassment and hatred are concentrated in certain groups or spaces. The characteristics targeted by the hate messages are closely tied to the user’s virtual appearance, so that the user is attacked for their avatar. However, the interviewee relates these attacks to their personal identity: they do not say “[…] my avatar was attacked for being a furry” but “I’ve been hated on for being a furry” (I 1). This suggests a strong identification with the avatar and shows that attacks are perceived as personal rather than avatar-related. In addition, there is gender-based discrimination, “I’ve been hated on for being a female.” (I 1), and the combination of both characteristics with weight-based discrimination: “I’ve been hated on for being a female furry because apparently that immediately makes you 500 pounds.” (I 1). In such situations, the user makes short work of it: “It’s just the block button and then you don’t need to talk to those people anymore.” (I 1). Interviewee 3 also reports people “who hate the Furry community” (I 3). This raises the question of whether avatars and sub-communities in virtual realities give rise to new forms of digital hate communities directed against certain classes of avatar identities.

Interviewee 2 states, “I don’t think there’s really any counter speech, it’s just a straighter blocking.” The interviewee observes that people differ in how sensitively they react to hate speech and how readily they use the blocking function:

“It really depends on the person who’s on the receiving end of the offensive speech […] Personally, if anyone’s doing hate speech around me, I’m the person to just block because I can’t be bothered dealing with it.” (I 2)

Interviewee 2 makes a direct comparison to social media, noting that in VRChat there is “[…] definitely not as much censorship in chat as there is on social media […] But via chat, it’s probably the worst. The difference is that you have to be unlucky enough to end up in the same room as those people, whereas with social media, everyone has access to it.”

Consequently, the technical barrier to accessing social VR still offers some protection from being flooded with hate messages compared with traditional social media. At the same time, the interviewee points out that such content has a more intense effect in social VR and requires a more active response: “It’s just like I feel like via chat is worse in person […] But I’d say via chat is way more in your face about and then social media is.” (I 2)

Interviewee 1 compares VRChat to social media, stating that it functions more like a social network than a game. They highlight that, like social media, VRChat has different groups and communities, some of which can be hostile.

Interviewee 2 reports widespread racism, offensive language, and hate speech targeting gender and sexual orientation. They note that these issues are encountered in everyday social interactions within VRChat.

Overall, the interviews suggest that while social media and VRChat share some similarities in terms of harassment, the immersive nature of VRChat makes negative interactions feel more personal and intrusive, requiring more proactive measures to manage and mitigate them.

Interviewee 1 reports experiencing harassment mainly in public worlds, which they therefore avoid, meeting instead mostly with their own circle of friends in VR: “Harassment typically comes in whenever I go to public worlds […] that’s one of the main reasons why I don’t go to public worlds anymore.” (I 1)

The interviewee experiences harassment mostly in verbal form, because they hide other users’ avatars by default in the settings: “It’s mostly speech, especially because I personally have everyone’s avatars turned off by default, and then I have to manually go in and show people’s avatars.” (I 1) However, there are also forms of abuse specific to how embodiment is implemented in social VR. For example, offensive images can be used as avatars, or high-resolution avatars can overload and crash other users’ hardware. The interviewee protects themselves against this by disabling avatars by default:

“I used to be close friends with a guy who streamed UM music on VR chat […] He played live guitar, and it was mostly well-received. But there were disturbing avatars, like gore-themed PNGs, and crashers with particle effects that could crash even powerful computers. That’s why I keep avatars off by default and only enable them after ensuring someone seems decent.” (I 1)

In addition, the interviewee describes situations in which she and a friend streamed from VR on Twitch:

“[…] Almost every stream had someone trying to crash it with a malicious avatar or spamming the N-word because they knew it was streamed on Twitch. I blocked people daily and used a shader-equipped avatar to see hidden users through walls, allowing us to block them via the menu.” (I 1)

The statements reveal the high level of technical skill among early adopters of VR, who developed various tricks to deal with unintended, sometimes malicious, interventions in their environments. They also point to key technological challenges for the goal of photorealistic real-time presence or highly authentic avatars: most consumer hardware is not (yet) capable of rendering such avatars without being overloaded. Differences in hardware quality can be exploited, consciously or unconsciously, to the disadvantage of people with less powerful equipment, a new form of digital divide in immersive environments. This poses a major challenge both for the development of innovative technologies and for the establishment of interoperability, and it bears directly on users’ sense of security and stability in immersive virtual environments.

Interviewee 3, on the other hand, reported barely noticing any harassment or hate speech. Only once had she been told to “kill me” (I 3). In her experience, harassment came mainly from people close to her; she had not experienced any from strangers: “No, I haven’t experienced that from strangers. They’re all nice, even in public […] I feel like I see most people just talking to each other like that. Yeah, I don’t get harassed that much.” (I 3)

Interviewee 4 reported a lot of gender-based harassment in Social VR:

“Mostly just people being like as soon as I speak because I’m a female, they’ll be like, my God, it’s a female here. What is she doing? She should be like back in the kitchen or something like that […].”

Besides speech, avatars are also used for harassment:

“But mostly kids that usually come up and are like, oh my God, you want to see a trick and either pull out like something offensive on an avatar.” (I 4) Again, this is mainly a problem in public spaces. According to Interviewee 4, however, harassment in VR is not as hurtful as in real life.

The interviewees reported varied experiences with harassment and hate speech in VRChat, highlighting significant challenges and the ways users cope with them. The interviews indicate that harassment in VRChat is more prevalent in public spaces, prompting users to retreat into private groups. Verbal harassment and technical exploits are common, and users often need advanced technical skills to protect themselves. The digital divide exacerbates the problem, as less powerful hardware leaves users more vulnerable. While some users report minimal harassment, gender-based harassment remains a significant problem, particularly in public spaces.

Experienced political extremism

When asked about extremist or hateful political statements in VR, the interviewees reported hardly any such observations. Interviewee 1 assumes that such content exists, because “[…] everyone has their own groups and often, from what I’ve witnessed, it stays out of the public world. Or if it’s in a public world, it gets shut down very quickly if it reaches an extremely hateful level.” Personally, however, the interviewee “didn’t get involved in anything extremely political” (I 1). Nevertheless, they did notice individual statements in support of Donald Trump that disturbed their need for calm and relaxation:

“I’ve run into people who have tried to say, Donald Trump 2024, build the wall. And I want to say that in most cases that I’ve seen, they were just bad memes. And I end up blocking those people, too, because I’m here to relax, not to think about politics.” (I 1)

Interviewee 2 likewise stays away from politics in VR:

“I never played VRChat to bring political views into my life, so I stayed away from anything political, OK?” Topics such as Russia’s war against Ukraine or inflation do come up, “Again, Ukraine, Russia, but inflation is a pretty big deal and how it affects people in different countries, things like that,” but “it was never anything super political.” (I 2)

Interviewee 3 also initially denied encountering political content but then said they had met users in VR “[…] who are Nazis for no reason. So, the classic, yes, the classic stupid people who bring back Nazis and fascists like that. People who hate for no reason.” (I 3)

Interviewee 4 also reported encountering “Trump supporters or like fascist or extremist behavior, that kind of thing”, but not “too much of it”. Bystanders can also witness political discourse in VR: “I’ve met people talking about it in public and they’ve been arguing amongst themselves and I’m standing here listening to it just to hear their sides”. Regarding the feeling of safety in social VR, the interviewee describes rippers (users who copy and steal other people’s avatars) in particular as a danger and complains that the platform does not respond adequately to users’ concerns and problems.

In summary, all interviewees had experienced harassment and hate speech, albeit to varying degrees of intensity. Gender-based devaluation was mentioned particularly frequently. Verbal statements dominate, but destructive uses of VR technologies are also experienced as harassment. The central coping strategy is to block those who discriminate or harass. Measures such as reporting accounts or criminal consequences for perpetrators were not mentioned in this context. How blocking statements and behaviors perceived as offensive or discriminatory affects social relationships in social VR, and social behavior in the physical world, therefore deserves special attention in future research.

Current protection mechanisms

We asked interviewees how they used and evaluated the protection mechanisms that VRChat currently offers. Among other things, we asked about their awareness and use of the VRChat Safety and Trust System, which allows users to customize their safety settings, block or mute other users, report abusive behavior, and create secure instances. With these questions, we aimed to gain insight into the effectiveness and limitations of VRChat’s protection mechanisms and what they mean for people’s ability to interact socially in VR.

Interviewees responded that they tend to mute and block other users on a daily basis. The people on their blocklist are mostly strangers.

Interviewee 1 explains how the vote-kick function works on VRChat:

“So how it works is anybody is able to put in a vote kick for someone who is being loud, being, harassing, whatever the reason may be […]. You can click on the person and do vote kick and then it’ll pop up on everybody else’s screen saying a vote kick has been started against player name. Do you agree? And you can select yes, or no?”

The interviewee also describes how the feature does not always work as intended:

“But I have seen multiple instances where everyone, well most everyone would click yes and agree for this person to be removed from the instance. And the vote would go through, but the person would still remain in there. This has been a VR chat issue for as long as I’ve been playing.” (I 1)

Interviewee 1 notes that muting and blocking help prevent future incidents but do not protect users from initial exposure to hate or discrimination:

“[…] that’s a tool to help prevent future from that person, but it’s not gonna fully stop it before it happens. You are going to probably hear some sort of comment or see some sort of thing before you block them. That’s why I said the only foolproof way is to just not go to public worlds.”

How often Interviewee 1 uses the blocking and muting features depends on the situations and environments they are in:

“[…] In situations where I was like back with my friend who was recording, it got to the point to where almost every stream there would be somebody who would either log in with a crasher avatar and try to crash him, or would think it was the funniest thing to just say the N word over and over again knowing that he was streaming on Twitch. So back then I used to block pretty much daily, and I actually have an avatar that has a shader on it to where you can see people’s avatars, even through walls.”

Interviewee 2 states that they stopped going to public worlds to avoid having to block or mute people, and that they deal with hateful strangers immediately: “I either just mute or block people right away. If I can’t be bothered with really hateful speech, that’s what I would do, at least to strangers. If it’s with friends and stuff, then I just tell them to shut up.” (I 2)

Interviewee 2 says that they usually feel safe, but that ‘Internet trolls’ are the main reason they sometimes feel unsafe on VRChat:

“I don’t think I really feel unsafe in VRChat. I mean. There’s obviously a lot of people who are very racist specifically and offensive people that aren’t always fun to be around and listen to. […] Internet trolls are thing even in VRChat. So that’s definitely something I feel unsafe about […].”

Interviewee 3 says that they generally feel safe even when harassed, but that they are afraid of stalkers and of hackers who might steal their personal data: “[…] people who like harass or stuff like that, I don’t mind too much. I can block them if they are too much, but the only things I don’t feel safe with are stalkers and hackers […].” (I 3)

The interviewee further explains that they first mute the user in question before blocking them: “When they get too much, I block them, but usually I just mute the people but if I see they keep going even when I say stop, I’m gonna block them. So, the fact I could block a more easier person is something I like. […]. I try to understand why this person do this stuff because the other person know what he’s doing, what they doing. But if I see there is not feedback, I just block him.” (I 3)

Interviewee 4 also first mutes users who behave inappropriately before blocking them:

“[…] if it gets too extreme, sometimes I will just block or mute the user. Sometimes I’ll mute them first, but then if they’re really trying to be annoying, even after them being muted to where I can’t hear them, I’ll just block their avatar entirely.”

When they feel harassed or bothered by a user, the interviewee retreats to their own world and invites only selected friends:

“And if they’re trying to like, follow me through another friend or so, since you can join off friends in other worlds, I’ll sometimes just go into my own like private world and then invite the few friends that I want.” (I 4)

Overall, while VRChat offers various protection mechanisms, their effectiveness is limited. Users adopt personal strategies, such as avoiding public spaces and using advanced blocking techniques, to enhance their safety and ensure a more positive experience in virtual environments.

Further protection suggestions and challenges

Following the previous questions about current protection methods and how our participants use them, we asked the interviewees for their own suggestions for new or improved safety features in social VR, based on their experiences and insights.

Interviewee 1 likes the approach and tools VRChat provides but states that its current protection systems are still buggy and need improvement:

“[…] VRChat […] should fix their vote kick thing. […] what VRChat has done to be able to make it so easy to control, like who you’re seeing, what you’re seeing, and being able to block people if needed is super helpful […].”

Interviewee 2 reflects on censoring spoken language, as opposed to text, and on how such censorship could affect the way people socialize in VR:

“I feel like it’s both good and bad that there’s not a lot of censorship. […] You would need to do voice recognition on everything. Probably that would be a big decision. That would probably make the game pretty unplayable for a lot of people because that amount of information your game suddenly needs to process.”

The interviewee goes on to say that VRChat’s existing moderation features either do not work or do not yet deliver the desired outcome:

“[…] VRChat has never been really good at what would it get modern, moderate the game itself […] Getting reported, that would definitely help the censorship and VRChat and this general moderating people.” (I 2)

Interviewee 2 also suggests a ranking and trust system:

“[…] it’s basically like a kind of record of your behavior in a way that you’re suggesting where you can see okay this person, I didn’t know them before. And so, they come in the room and then you’re going to basically see how often they have been reported […].”

Interviewee 2 also proposes an ID verification process, primarily to make interactions with minors safer: “[…] as an example, if you’re below the age of 18, you should have a way to check box then. So that if you’re in a space within with somebody minor, there is no way to hide.” (I 2)

Interviewee 3 suggests a warning system that alerts users when someone on their blocklist is present: “[…] you want to get warned if a person that you have blocked is in a certain world, so you want to kind of have a warning about that, that you don’t join the same instance.” (I 3)

Interviewee 4 raises concerns about anonymity as well as the protection of personal data and assets:

“I wish there was another way besides using a VPN changer or something, but something to change your IP address. Because there’s a lot of people in VR who can find that information about you and take that information, know your home address and everything else about you. And that is like really uncomfortable, and I wish people didn’t know how to do that […].”

Blocking as a personal and structural security strategy

We specifically looked at how the interviewees used and perceived the blocking system in VRChat. We asked them about the number and kind of people they had blocked, the reasons for and outcomes of blocking, and its challenges and drawbacks. By examining this popular protection mechanism, which differs profoundly from anything available in physical life, we aimed to gain insight into the role and impact of blocking as a personal and structural security strategy in social VR.

Interviewee 1 points out that blocking only changes what the blocking user sees, not what others in the instance see:

“The biggest thing is that if you block someone, it blocks it for you, but not for everyone else in the instance. So, I’ve run into things where I blocked someone and then like the poor person standing next to me didn’t want to block them for whatever reason. And then they started harassing the person next to me trying to get them to talk to me […].”

Regarding the possibility of being blocked by others, they say: “I mean, I don’t know if I’ve ever been blocked. It doesn’t tell you like when you do. Honestly, it doesn’t make a difference to me.” (I 1)

Interviewee 1 goes on to describe whom they block and why:

“I don’t know how many are on my block list and I’m also not sure how to check. I can say probably like 2 to 300. […] most of the people on the block list are people who came up immediately saying slurs or people with crasher avatars or just small children that were being harassing and should not be on VR.” (I 1)

Interviewee 2 notes the frequent presence of ‘trolls’:

“I know there was a way to see your full block list and your full mute list, but I don’t think that I don’t think that API is running anymore. […] but it’s definitely over 500. They’re usually strangers who are being toxic in public spaces. […] or just straight up trolls.”

Interviewee 4 has comparatively few users on their blocklist:

“I try not to block as many people. So, 22, yeah. […] probably one of them was someone I knew before, but most of the rest of them are strangers. It’s people just coming up to you, screaming in their mic or doing something irritating like and just really trying to get at you. It’s just random people.”

The Interviewee also describes negative consequences that arise from the blocking feature:

“[…] seeing it from another group standpoint and seeing a friend of a friend block somebody, there is usually an issue with that. Because then other people in the group who hang out with that person that’s blocked, they’ll be like, well, we can’t hang out and do events or group activities because you have them blocked. […] maybe that person is trying to like contact you in another way to try to get them unblocked and be like, hey, add me back, blah blah blah. […] I’ll try to give them a warning, but honestly, if they’re not listening to me, it’s an immediate block.” (I 4)

Interviewee 2 takes an unemotional view of being blocked:

“I have been blocked before and it’s never really been something that I cared about to be honest, it hasn’t really benefited me or affected me whatsoever […].” The interviewee adds that people sometimes use the blocking feature merely to get a better view of the mirror in the virtual environment: “[…] cause a lot of people will block you because that’s a mirror, right? This guys in the way just to block him and sit where he sat stuff like that happens a lot in public spaces.” (I 2)

Interviewee 3 describes being blocked once by their ex-partner, which caused no confusion or irritation: “No, Like I say, I don’t get blocked, but I get gossiped. […] But get blocked maybe one time from my ex, Yeah, and. But there was nothing like specifically weird about that situation that I you could remember.” (I 3)

Interviewee 4, by contrast, describes being blocked as something that can be confusing and even hurtful:

“So, I have gotten blocked before […] for absolutely no reason at all. I know I didn’t do anything or say anything hurtful. […] And when I get blocked, if it’s from a random person […] sometimes I’ll just laugh it off and I’ll be like, OK, whatever. They have their reason. Now, if it’s someone that’s a friend who has blocked me before, I’ll feel kind of hurt and I’ll be like, well, what did I do? What did I do to hurt you? What can I do to make this better?” (I 4)

Overall, the interviewees describe blocking as a common tool for managing harassment in VRChat, but its effect is limited to the user who initiates the block. The feature can also cause social complications and emotional reactions, depending on the context and the relationship between users.

Potential legal consequences of actions in social VR

An important aim of our study was to examine the legal implications of actions in social VR, specifically on VRChat. This is a difficult topic, as anonymity is still a very high priority for most users and no social VR platform currently performs a legal identity check. We asked interviewees about their awareness of, and opinions on, existing and emerging laws that could apply to social VR platforms, in order to gain insight into the challenges and opportunities of legal governance in social VR. We also asked about their views on the ethical and moral concerns that arise in social VR, such as identity, impersonation, consent, and harassment.

Interviewee 1 suggests that certain offenses committed in the metaverse should be prosecuted and ruled on by the courts responsible for the user’s physical location:

“I believe that it would depend on what criminal activity I would also for example, say you have a restraining order against someone and that someone uses VR to try to bypass that restraining order. I do think that that should be a violation, and they should be charged via whatever court gave that restraining order.” (I 1)

They also stress that the theft of avatars and their 3D files is a major issue that currently goes unprosecuted: “Now when it comes to the stealing avatars […] that is a breach of copyright. You are stealing somebody’s intellectual property.” (I 1)

Interviewee 2 sees the safety of minors and the presence of pedophiles on VRChat as the most pressing issues for the platform and for policy makers to tackle:

“Yes, there are so many pedophiles in this game it is actually ridiculous. […] And they will kind of prowl upon anyone who has some sort of need or wanting for attention, regardless of age. And there are a lot of really social, angsty teenagers in this game aged like 13 to 17, […] some of these guys or girls are really like just sinking their teeth into because they can give them attention that they need in exchange for […] certain types of like child pornography and like abuse towards minors happening on the platform.”

Interviewee 3 argues that crimes in VR should be prosecuted and suggests security recordings of virtual environments while users interact: “That’s if he’s up and online, that doesn’t mean he’s not a crime. […] There is need a video of the world or two hours of the world. So, like a security Cam basically.” (I 3)

Interviewee 4 stresses parents’ responsibility to monitor their children’s media consumption and names crimes involving drugs and the abuse of minors as the most urgent to address:

“[…] pedophiles being on the game and going to young children and being like, hey, well, your parents aren’t watching you playing this game […]. But there’ll be people in this meeting like, well, your mom or dad isn’t watching you. You have this headset on. I’ll be your new mom or dad. And I’ll take care of you, and I’ll buy you whatever you want. You just have to listen to me. And they will do that. […] And that’s how kids fall into their trap.”

The interviewees highlight issues such as harassment, avatar theft, and the presence of pedophiles on VRChat. They emphasize the need for legal accountability for criminal activities in VR and stress the importance of protecting minors and intellectual property rights on the platform.

What should policy makers, regulators or designers know about VR experiences like VRChat?

The final question of our study asked for recommendations and insights for policy makers, regulators, or designers who are interested in or responsible for social VR experiences like VRChat. We asked participants about their expectations and suggestions for improving the quality, safety, and accessibility of social VR platforms.

Interviewee 1 emphasizes the importance of the blocking feature, which they see as most helpful for preventing continued harassment: “[…] I use the avatar blocked by default just in case if somebody has an avatar that I really don’t want to see. Um. And I think if more VR applications implemented something like that as well, that would just be huge.” (I 1)

Interviewee 2 warns about the safety of minors on social VR platforms as well as the safety of adults mistakenly interacting with minors: “But there are a lot of pedophiles playing this game, sadly. And that I feel like needs to be prosecuted by the police. […] There should be some kind of punishment, but it’s next to impossible to actually prosecute people online.”

Interviewee 3 returns to the previously mentioned security recordings and to ID verification as a way to enable prosecution of online crimes: “Because I think we’re definitely a lot of people are looking at is something like ID verification […] if the metaverse is going to be a real second kind of world […] It’s probably also a matter of like, does this person need to verify themselves, right?”

They further stress that ID verification should be free of charge, so that people in financially weaker countries and individuals with little money are not excluded: “[…] Maybe there is someone who is really poor and they tried to escape from their daily life and got to have some fun. If you put another thing so this person has to pay, I think it’s not good. So, like a verification. A free verification.” (I 3)

Interviewee 4 points out the importance of policy makers and regulators gaining first-hand experience with social VR platforms, as a basis for more informed decisions, and of staying updated on the needs and experiences of long-term users: “[…] a lot of them, when they hear about VRChat, they don’t know anything about it. […] I just wish that they can at least have a little bit of you and put the headset on, so they know what’s going on in the world and understand it a bit more before making their final decisions.”

They further argue that the level of immersion heightens the urgency for regulations and safety on social VR platforms: “I feel like they when I’m putting on this headset, it isn’t just a game. At the end of the day, there’s people behind the headset […] who have actual feelings and thoughts.” (I 4)

Interviewee 4 also warns that unsupervised time in the headset is risky for children and should be monitored by adults:

“[…] I want more people to know that parents and just whatever adult is watching your child needs to have better like moderation over the headset. […] Because they’re the age limit for VR and there’s kids younger than that are entering this game […]. And I really blame the parents for that.”

Interviewees in the study recommend policy makers and platform designers enhance safety features in social VR, such as implementing effective vote kick systems, improving minor protection, and considering ID verification for user accountability. They emphasize the need for first-hand experience by regulators to better understand the platform’s dynamics and stress the importance of making security measures accessible to all users, regardless of their financial situation. Additionally, they highlight the necessity of parental supervision to safeguard children from inappropriate content and interactions.

Additional comments from Interviewees

In conclusion, Interviewee 1 returns to the unique possibilities VR offers for personalizing the user experience and enabling social interaction for people who find socializing in real life difficult: “[…] I am an introvert […] I started streaming around 2020 […] those things have really helped me socialize more. I think it’s usually because of the control aspect of it. […] And if force comes to absolute worse and I get too overwhelmed, I can just leave.” (I 1)

Interviewee 2 also emphasizes their experience of practicing social interaction in a safe space: “Social interaction was my reason for getting in VR, yes. But I feel like I’ve grown enough as a person since starting the game, starting VRChat and to interact with real people as well without being too insecure about myself or being super angsty.” (I 2)

Interviewee 4 points out that the age rating for social VR spaces should be higher as the technology is not comparable to other games and the degree of immersion could be a safety risk for minors:

“Every game is so harmful to the mind and body or whatever. […] The only way you’re going to understand [this game] is by the voice of the people and by experiencing yourself how immersive such a VR environment and VR situations in VR can be, right? […] you can buy suits to feel touch in VR […] like you can touch people over the Internet. You really want to have like a more or less regulated metaverse where you can try these things in a safe context at least. It should at least be 18 plus, if not even 21.” (I 4)

The Interviewees highlight the unique benefits of VR for improving social interaction skills, especially for introverts, due to its controlled environment and customizable features. They also stress the need for higher age ratings for social VR spaces due to the heightened immersion and potential risks, suggesting that regulations should adapt to the advanced nature of VR technology.

Discussion of the results

The study is limited by its small sample of only four participants. The sample is also restricted in terms of age groups, and although male, female, and diverse gender identities are represented, each is represented by very few participants. Geographically, all participants come from the United States (Missouri, Texas) or Europe (Denmark, Italy), which limits the generalizability of the findings.

Summary of key findings

Experiencing and expressing personalities unlinked from their material bodies serves as an opportunity to experiment with and learn about social interaction. Friendships, relationships, and small talk with strangers are embraced in VR for various reasons, such as users’ location or social anxiety.

Anonymity in online spaces has significant positive and negative impacts on people’s behavior.

Protection mechanisms are still in development, buggy, and not a main priority for platforms.

The most pressing area of improvement is the safety of minors through identity verification of users.

Relevant concerns include the limited knowledge that policy makers, legislators, and other decision makers have of the various immersive aspects of social VR. Users emphasize the incomparably immersive nature of VR and the resulting social aspects, such as friendships, relationships, and personal development, that come from interacting in digital environments for large amounts of time.

Opportunities and challenges of social VR

Opportunities: Socializing with like-minded people worldwide. Logistics and environmental impact are largely independent of users’ locations. Learning and collaboration are accelerated through high levels of immersion. Creative expression through interactive tools, as well as relevant audiences, are widely accessible.

Challenges: Fraud and harassment by incognito users are widespread and frequent in online social spaces. The immersive quality of VR makes harassment particularly dangerous. Anonymity and missing age verification enable age-inappropriate behavior and abuse of minors.

Subsequent research questions

Socializing in VR compared to material reality (that is, our regular physical reality) and its effects on users of different age groups.

The interviews indicate that experiencing and expressing personalities unlinked from their material bodies in VR offers users the chance to experiment with and learn about social interaction. Many embrace friendships, relationships, and small talk with strangers because of factors such as their location or social anxiety. Anonymity in online spaces has significant positive and negative impacts on behavior, while protection mechanisms are still under development, often buggy, and not prioritized by platforms. The most pressing improvement needed is the safety of minors through identity verification. Policy makers, legislators, and other decision makers often lack comprehensive knowledge of the immersive aspects of social VR, which users describe as unparalleled and as fostering friendships, relationships, and personal development through extensive interaction in digital environments.

Social VR presents opportunities such as socializing with like-minded people worldwide, logistical and environmental independence from users’ locations, accelerated learning and collaboration through high levels of immersion, and creative expression through interactive tools and accessible audiences. However, it also faces challenges including widespread fraud and harassment by incognito users, the particularly dangerous nature of harassment due to VR’s immersive quality, and the risks posed by anonymity and lack of age verification, which enable age-inappropriate behavior and abuse of minors. Subsequent research could explore socializing in VR compared to material reality and its effects on users across different age groups.

References

- Banakou, D., Groten, R., & Slater, M. (2013). Illusory ownership of a virtual child body causes overestimation of object sizes and implicit attitude changes. Proceedings of the National Academy of Sciences, 110(31), 12846–12851. https://doi.org/10.1073/pnas.1306779110

- Deighan, M. T., Ayobi, A., & O’Kane, A. A. (2023). Social virtual reality as a mental health tool: How people use VRChat to support social connectedness and wellbeing. Proceedings of the ACM CHI Conference on Human Factors in Computing Systems.

- Hinduja, S., & Patchin, J. W. (2024). Metaverse risks and harms among US youth: Experiences, gender differences, and prevention and response measures. New Media & Society, 26(1), 1–22. https://doi.org/10.1177/14614448241284413

- LaValle, S. M. (2020). Virtual reality. Cambridge University Press.

- Quent, M. (2023). Demokratische Kultur und das nächste Internet: Chancen und Risiken virtueller immersiver Erfahrungsräume im Metaverse. In Institut für Demokratie und Zivilgesellschaft (Ed.), Wissen schafft Demokratie: Schwerpunkt Netzkulturen und Plattformpolitiken (Vol. 14, pp. 30–43). Jena, Germany.

- Sabri, N., Chen, B., Teoh, A., Dow, S. P., Vaccaro, K., & ElSherief, M. (2023). Challenges of moderating social virtual reality. Proceedings of the ACM CHI Conference on Human Factors in Computing Systems.

- Stockselius, C. (2023). Social interaction in virtual reality: Users’ experience of social interaction in the game VRChat (Master’s thesis). University of Skövde.

- Vogelgesang, A. (2024). Social VR platform design, user creativity and aesthetic governance. Project Immersive Democracy. https://metaverse-forschung.de/en/2024/02/15/social-vr-platform-design-user-creativity-and-aesthetic-governance/